Image science investigates the ways that image quality can be defined, measured and optimized; it touches and improves the visualization of everything from healthy bones to unstable atmospheres to millennia-old geological formations. This interdisciplinary field studies the physics of photon generation, the propagation of light through optical systems and signal generation in detectors, among other topics. It also considers the statistics of random processes and how they affect the information contained within images.

The faculty in image science at the Wyant College of Optical Sciences show particular strength in designing new technology for medical imaging, homeland security, earth sciences and other applications, and in developing new methods for assessing image quality by quantifying how accurately imaging systems can accomplish certain analytical tasks.


Image Science Faculty

David Brady

Lars R. Furenlid

Arthur Gmitro

Dongkyun "DK" Kang

Travis Sawyer

Research Labs in Image Science

- Biomedical Applied Imaging Lab (Assistant Professor Yeran Bai)

- Biomedical Optics and Optical Measurement Laboratory (Assistant Professor Travis Sawyer)

- Biomedical Spectroscopy and Interferometry Laboratory (Associate Professor Leilei Peng)

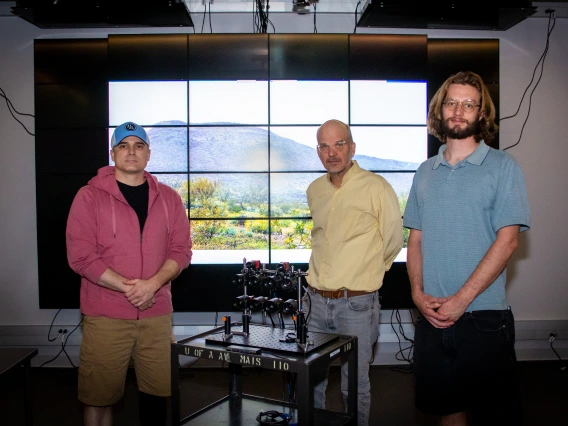

- The Camera Lab (Professor David Brady)

- Center for Gamma-Ray Imaging (Professors Lars Furenlid and Matthew Kupinski)

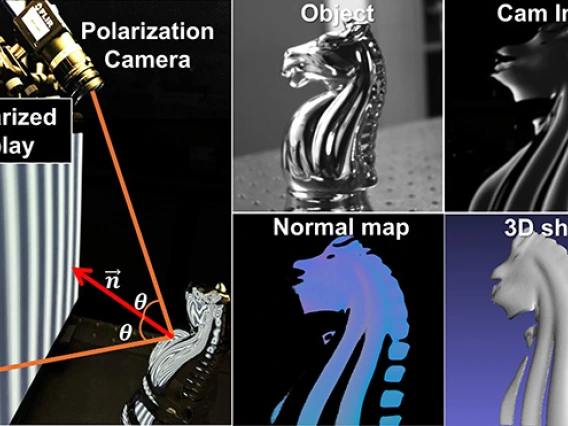

- Computational 3D Imaging and Measurement Lab (Associate Professor Florian Willomitzer)

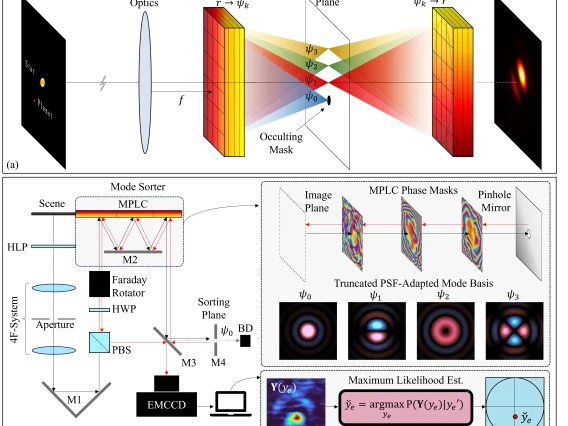

- Intelligent Imaging and Sensing Lab, I2SL (Professor Amit Ashok)

- Polarization Lab (Associate Professor Meredith Kupinski)

- Translational Optical Imaging Lab (Associate Professor Dongkyun "DK" Kang)